Failed to Upload File Google Cloud Bucket

This page describes troubleshooting methods for common errors you may encounter while using Cloud Storage.

See the Google Cloud Status Dashboard for information about regional or global incidents affecting Google Cloud services such as Cloud Storage.

Logging raw requests

When using tools such as gsutil or the Cloud Storage client libraries, much of the request and response information is handled by the tool. However, it is sometimes useful to see details to aid in troubleshooting. Use the following instructions to get request and response headers for your tool:

Console

Viewing request and response data depends on the browser you're using to access the Google Cloud Console. For the Google Chrome browser:

- Click Chrome's main menu button.

- Select More Tools.

- Click Developer Tools.

- In the pane that appears, click the Network tab.

gsutil

Use the global -D flag in your request. For example:

gsutil -D ls gs://my-bucket/my-object

Client libraries

C++

- Set the environment variable CLOUD_STORAGE_ENABLE_TRACING=http to get the full HTTP traffic.

- Set the environment variable CLOUD_STORAGE_ENABLE_CLOG=yes to get logging of each RPC.

C#

Add a logger via ApplicationContext.RegisterLogger, and set logging options on the HttpClient message handler. For more information, see the FAQ entry.

Go

Set the environment variable GODEBUG=http2debug=1. For more information, see the Go package net/http.

If you want to log the request body as well, use a custom HTTP client.

Java

- Create a file named "logging.properties" with the following contents:

# Properties file which configures the operation of the JDK logging facility.
# The system will look for this config file to be specified as a system property:
# -Djava.util.logging.config.file=${project_loc:googleplus-simple-cmdline-sample}/logging.properties

# Set up the console handler (uncomment "level" to show more fine-grained messages)
handlers = java.util.logging.ConsoleHandler
java.util.logging.ConsoleHandler.level = CONFIG

# Set up logging of HTTP requests and responses (uncomment "level" to show)
com.google.api.client.http.level = CONFIG

- Use logging.properties with Maven:

mvn -Djava.util.logging.config.file=path/to/logging.properties insert_command

For more information, see Pluggable HTTP Transport.

Node.js

Set the environment variable NODE_DEBUG=https before calling the Node script.

PHP

Provide your own HTTP handler to the client using httpHandler and set up middleware to log the request and response.

Python

Use the logging module. For example:

import logging
import http.client

logging.basicConfig(level=logging.DEBUG)
http.client.HTTPConnection.debuglevel = 5

Ruby

At the top of your .rb file after require "google/cloud/storage", add the following:

Google::Apis.logger.level = Logger::DEBUG

Error codes

The following are common HTTP status codes you may encounter.

301: Moved Permanently

Issue: I'm setting up a static website, and accessing a directory path returns an empty object and a 301 HTTP response code.

Solution: If your browser downloads a zero byte object and you get a 301 HTTP response code when accessing a directory, such as http://www.example.com/dir/, your bucket most likely contains an empty object of that name. To check that this is the case and fix the issue:

- In the Google Cloud Console, go to the Cloud Storage Browser page.


- Click the Activate Cloud Shell button at the top of the Google Cloud Console.

- Run gsutil ls -R gs://www.example.com/dir/. If the output includes gs://www.example.com/dir/, you have an empty object at that location.

- Remove the empty object with the command:

gsutil rm gs://www.example.com/dir/

You can now access http://www.example.com/dir/ and have it return that directory's index.html file instead of the empty object.
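If you would rather find such placeholder objects programmatically, the following Python sketch filters a listing for zero-byte objects whose names end in a slash. It assumes you already have (name, size) pairs, for example collected from the google-cloud-storage library's `Client.list_blobs`; the function name `find_directory_placeholders` and the sample listing are hypothetical.

```python
from typing import Iterable, List, Tuple


def find_directory_placeholders(listing: Iterable[Tuple[str, int]]) -> List[str]:
    """Return names of zero-byte objects whose names end in "/".

    `listing` is a hypothetical iterable of (object_name, size_in_bytes) pairs.
    Such objects shadow the directory path and cause the 301 behavior above.
    """
    return [name for name, size in listing if name.endswith("/") and size == 0]


# Illustrative listing for a bucket hosting a static site.
listing = [
    ("dir/", 0),                # empty placeholder object
    ("dir/index.html", 1024),
    ("other/page.html", 512),
]
print(find_directory_placeholders(listing))  # ['dir/']
```

Each name returned is a candidate for the gsutil rm step shown above.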

400: Bad Request

Issue: While performing a resumable upload, I received this error and the message Failed to parse Content-Range header.

Solution: The value you used in your Content-Range header is invalid. For example, Content-Range: */* is invalid and instead should be specified as Content-Range: bytes */*. If you receive this error, your current resumable upload is no longer active, and you must start a new resumable upload.

401: Unauthorized

Issue: Requests to a public bucket directly, or via Cloud CDN, are failing with an HTTP 401: Unauthorized and an Authentication Required response.

Solution: Check that your client, or any intermediate proxy, is not adding an Authorization header to requests to Cloud Storage. Any request with an Authorization header, even if empty, is validated as if it were an authentication attempt.
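To illustrate the point, here is a minimal Python sketch, using only the standard library, that builds a GET request for a public object on the direct endpoint and drops any Authorization header, whatever its capitalization. The helper name `public_object_request` is hypothetical, and the sketch only constructs the request without sending it.

```python
import urllib.parse
import urllib.request
from typing import Dict, Optional


def public_object_request(bucket: str, object_name: str,
                          headers: Optional[Dict[str, str]] = None) -> urllib.request.Request:
    """Build an unauthenticated GET request for a publicly readable object.

    Any Authorization header (matched case-insensitively) is removed, because
    Cloud Storage treats a request carrying one, even an empty one, as an
    authentication attempt.
    """
    clean = {k: v for k, v in (headers or {}).items() if k.lower() != "authorization"}
    # quote() keeps "/" so nested object names stay readable in the path.
    url = "https://storage.googleapis.com/{}/{}".format(
        bucket, urllib.parse.quote(object_name))
    return urllib.request.Request(url, headers=clean, method="GET")


req = public_object_request("my-bucket", "dir/my object.txt",
                            {"Authorization": "", "User-Agent": "demo"})
print(req.full_url)                     # https://storage.googleapis.com/my-bucket/dir/my%20object.txt
print(req.has_header("Authorization"))  # False
```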

403: Account Disabled

Issue: I tried to create a bucket but got a 403 Account Disabled error.

Solution: This error indicates that you have not yet turned on billing for the associated project. For steps for enabling billing, see Enable billing for a project.

If billing is turned on and you continue to receive this error message, you can reach out to support with your project ID and a description of your problem.

403: Access Denied

Issue: I tried to list the objects in my bucket but got a 403 Access Denied error and/or a message similar to Anonymous caller does not have storage.objects.list access.

Solution: Check that your credentials are correct. For example, if you are using gsutil, check that the credentials stored in your .boto file are accurate. Also, confirm that gsutil is using the .boto file you expect by using the command gsutil version -l and checking the config path(s) entry.

Assuming you are using the correct credentials, are your requests being routed through a proxy, using HTTP (instead of HTTPS)? If so, check whether your proxy is configured to remove the Authorization header from such requests. If it is, make sure you are using HTTPS instead of HTTP for your requests.

403: Forbidden

Issue: I am downloading my public content from storage.cloud.google.com, and I receive a 403: Forbidden error when I use the browser to navigate to the public object:

https://storage.cloud.google.com/BUCKET_NAME/OBJECT_NAME

Solution: Using storage.cloud.google.com to download objects is known as authenticated browser downloads; it always uses cookie-based authentication, even when objects are made publicly accessible to allUsers. If you have configured Data Access logs in Cloud Audit Logs to track access to objects, one of the restrictions of that feature is that authenticated browser downloads cannot be used to access the affected objects; attempting to do so results in a 403 response.

To avoid this issue, do one of the following:

- Use direct API calls, which support unauthenticated downloads, instead of using authenticated browser downloads.

- Disable the Cloud Storage Data Access logs that are tracking access to the affected objects. Be aware that Data Access logs are set at or above the project level and can be enabled simultaneously at multiple levels.

- Set Data Access log exemptions to exclude specific users from Data Access log tracking, which allows those users to perform authenticated browser downloads.
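For the first option, a link of the form https://storage.cloud.google.com/BUCKET_NAME/OBJECT_NAME can be rewritten to the direct endpoint https://storage.googleapis.com/BUCKET_NAME/OBJECT_NAME. The following Python sketch performs that rewrite. The function name is hypothetical, and it assumes the object is publicly readable, since the direct endpoint does not rely on browser cookies.

```python
from urllib.parse import urlsplit, urlunsplit


def to_direct_api_url(url: str) -> str:
    """Rewrite an authenticated browser download URL into a direct API URL.

    https://storage.cloud.google.com/BUCKET/OBJECT
    becomes
    https://storage.googleapis.com/BUCKET/OBJECT
    """
    parts = urlsplit(url)
    if parts.netloc != "storage.cloud.google.com":
        raise ValueError(f"not an authenticated browser download URL: {url}")
    # Keep the path (bucket and object), query, and fragment unchanged.
    return urlunsplit(("https", "storage.googleapis.com",
                       parts.path, parts.query, parts.fragment))


print(to_direct_api_url("https://storage.cloud.google.com/my-bucket/my-object"))
# https://storage.googleapis.com/my-bucket/my-object
```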

409: Conflict

Issue: I tried to create a bucket but received the following error:

409 Conflict. Sorry, that name is not available. Please try a different one.

Solution: The bucket name you tried to use (e.g. gs://cats or gs://dogs) is already taken. Cloud Storage has a global namespace, so you may not name a bucket with the same name as an existing bucket. Choose a name that is not being used.

429: Too Many Requests

Issue: My requests are being rejected with a 429 Too Many Requests error.

Solution: You are hitting a limit to the number of requests Cloud Storage allows for a given resource. See the Cloud Storage quotas for a discussion of limits in Cloud Storage. If your workload consists of thousands of requests per second to a bucket, see Request rate and access distribution guidelines for a discussion of best practices, including ramping up your workload gradually and avoiding sequential filenames.
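A common way to avoid sequential filenames is to put a hash at the front of each name. The Python sketch below prepends the first few hex characters of a SHA-256 digest of the object name. The hash function, prefix length, and helper name are assumptions chosen for illustration.

```python
import hashlib


def spread_object_name(name: str, prefix_len: int = 6) -> str:
    """Prepend a short hash of `name` so that sequentially named objects
    (for example, timestamped log files) are spread across the key range
    instead of all landing in one contiguous range."""
    digest = hashlib.sha256(name.encode("utf-8")).hexdigest()
    return f"{digest[:prefix_len]}/{name}"


for n in ["logs/2024-01-01-00-00-00.txt", "logs/2024-01-01-00-00-01.txt"]:
    print(spread_object_name(n))
```

Because the prefix is derived from the name, you can recompute it to locate an object later, but listings no longer come back in the original sequential order.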

Diagnosing Google Cloud Console errors

Issue: When using the Google Cloud Console to perform an operation, I get a generic error message. For example, I see an error message when trying to delete a bucket, but I don't see details for why the operation failed.

Solution: Use the Google Cloud Console's notifications to see detailed information about the failed operation:

- Click the Notifications button in the Google Cloud Console header.

A dropdown displays the most recent operations performed by the Google Cloud Console.

- Click the item you want to find out more about.

A page opens up and displays detailed information about the operation.

- Click on each row to expand the detailed error information.

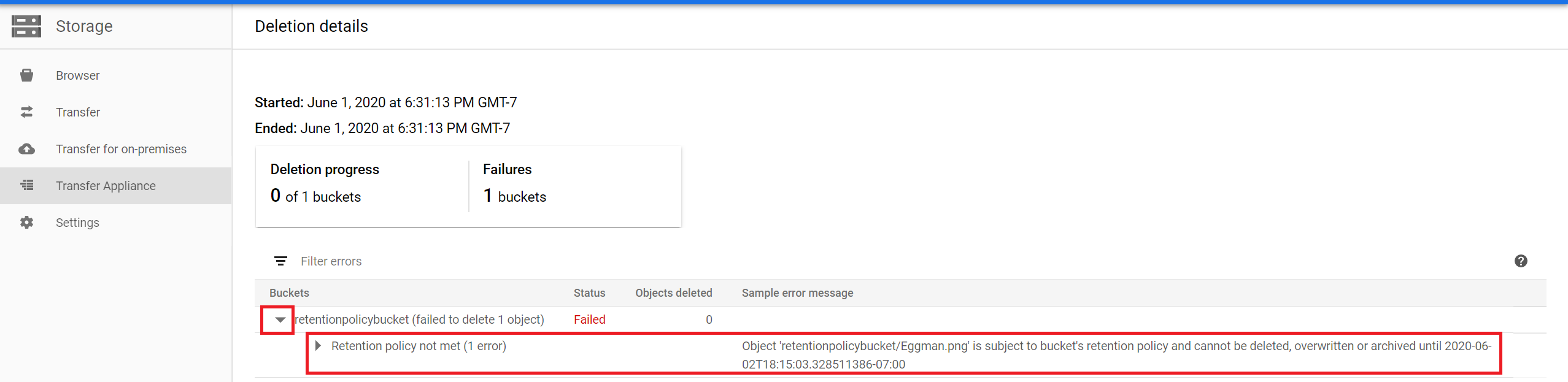

Beneath is an case of error information for a failed bucket deletion operation, which explains that a bucket retention policy prevented the deletion of the bucket.

gsutil errors

The following are common gsutil errors you may encounter.

gsutil stat

Issue: I tried to use the gsutil stat command to display object status for a subdirectory and got an error.

Solution: Cloud Storage uses a flat namespace to store objects in buckets. While you can use slashes ("/") in object names to make it appear as if objects are in a hierarchical structure, the gsutil stat command treats a trailing slash as part of the object name.

For example, if you run the command gsutil -q stat gs://my-bucket/my-object/, gsutil looks up information about the object my-object/ (with a trailing slash), as opposed to operating on objects nested under my-bucket/my-object/. Unless you actually have an object with that name, the operation fails.

For subdirectory listing, use gsutil ls instead.
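Because the namespace is flat, a "subdirectory" listing is just a prefix query with a delimiter. The following Python sketch emulates that behavior over a plain list of object names, to show why stat on my-object/ fails while listing the my-object/ prefix succeeds. It is an in-memory illustration with a hypothetical helper name, not a call to Cloud Storage.

```python
from typing import Iterable, List, Tuple


def list_with_delimiter(names: Iterable[str], prefix: str = "",
                        delimiter: str = "/") -> Tuple[List[str], List[str]]:
    """Emulate a prefix/delimiter listing over a flat set of object names.

    Returns (objects, prefixes): the objects directly "inside" `prefix`, and
    the distinct sub-"directory" prefixes, each ending in `delimiter`.
    """
    objects, prefixes = [], set()
    for name in names:
        if not name.startswith(prefix):
            continue
        rest = name[len(prefix):]
        cut = rest.find(delimiter)
        if cut == -1:
            objects.append(name)  # no further delimiter: a direct child
        else:
            prefixes.add(prefix + rest[:cut + len(delimiter)])
    return sorted(objects), sorted(prefixes)


names = ["my-object/a.txt", "my-object/b.txt", "my-object/sub/c.txt", "top.txt"]
print("my-object/" in names)  # False: no object with that exact name, so stat fails
print(list_with_delimiter(names, prefix="my-object/"))
# (['my-object/a.txt', 'my-object/b.txt'], ['my-object/sub/'])
```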

gcloud auth

Issue: I tried to authenticate gsutil using the gcloud auth command, but I still cannot access my buckets or objects.

Solution: Your system may have both the stand-alone and Google Cloud CLI versions of gsutil installed on it. Run the command gsutil version -l and check the value for using cloud sdk. If False, your system is using the stand-alone version of gsutil when you run commands. You can either remove this version of gsutil from your system, or you can authenticate using the gsutil config command.
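If you check many machines, you may want to read these values from a script. The following Python sketch parses `gsutil version -l` output, assuming each line has the form `key: value`. The helper name is hypothetical, and the sample text is illustrative and abbreviated, not captured from a real system.

```python
from typing import Dict


def parse_gsutil_version(output: str) -> Dict[str, str]:
    """Parse `gsutil version -l` style output into a key -> value dict.

    Assumes each line looks like "key: value"; other lines are skipped.
    """
    info = {}
    for line in output.splitlines():
        key, sep, value = line.partition(": ")
        if sep:
            info[key.strip()] = value.strip()
    return info


sample = """using cloud sdk: False
config path(s): /home/alice/.boto
"""
info = parse_gsutil_version(sample)
if info.get("using cloud sdk") == "False":
    print("stand-alone gsutil in use; config:", info.get("config path(s)"))
```

To feed it real output, you could pass it the `stdout` of `subprocess.run(["gsutil", "version", "-l"], capture_output=True, text=True)`.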

Static website errors

The following are common issues that you may encounter when setting up a bucket to host a static website.

HTTPS serving

Issue: I want to serve my content over HTTPS without using a load balancer.

Solution: You can serve static content through HTTPS using direct URIs such as https://storage.googleapis.com/my-bucket/my-object. For other options to serve your content through a custom domain over SSL, you can:

- Use a third-party Content Delivery Network with Cloud Storage.

- Serve your static website content from Firebase Hosting instead of Cloud Storage.

Domain verification

Issue: I can't verify my domain.

Solution: Normally, the verification process in Search Console directs you to upload a file to your domain, but you may not have a way to do this without first having an associated bucket, which you can only create after you have performed domain verification.

In this case, verify ownership using the Domain name provider verification method. See Ownership verification for steps to accomplish this. This verification can be done before the bucket is created.

Inaccessible page

Issue: I get an Access denied error message for a web page served by my website.

Solution: Check that the object is shared publicly. If it is not, see Making Data Public for instructions on how to do this.

If you previously uploaded and shared an object, but then upload a new version of it, you must reshare the object publicly. This is because the public permission is replaced with the new upload.

Permission update failed

Issue: I get an error when I try to make my data public.

Solution: Make sure that you have the setIamPolicy permission for your object or bucket. This permission is granted, for example, in the Storage Admin role. If you have the setIamPolicy permission and you still get an error, your bucket might be subject to public access prevention, which does not allow access to allUsers or allAuthenticatedUsers. Public access prevention might be set on the bucket directly, or it might be enforced through an organization policy that is set at a higher level.
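For reference, making a bucket's objects public through IAM means adding a binding that grants a role to allUsers. The Python sketch below shows that edit on a plain dictionary shaped like an IAM policy's `bindings` list. The role `roles/storage.objectViewer` is an assumption about the level of access you want, and the helper is hypothetical. If public access prevention is enforced, the setIamPolicy call still rejects such a policy no matter how it is built.

```python
import copy
from typing import Any, Dict


def with_public_read(policy: Dict[str, Any],
                     role: str = "roles/storage.objectViewer") -> Dict[str, Any]:
    """Return a copy of an IAM policy dict with `allUsers` granted `role`.

    The input policy is not modified; calling this twice is harmless.
    """
    new_policy = copy.deepcopy(policy)
    bindings = new_policy.setdefault("bindings", [])
    for binding in bindings:
        if binding.get("role") == role:
            members = binding.setdefault("members", [])
            if "allUsers" not in members:
                members.append("allUsers")
            return new_policy
    bindings.append({"role": role, "members": ["allUsers"]})
    return new_policy


policy = {"bindings": [{"role": "roles/storage.admin",
                        "members": ["user:alice@example.com"]}]}
print(with_public_read(policy))
```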

Content download

Issue: I am prompted to download my page's content, instead of being able to view it in my browser.

Solution: If you specify a MainPageSuffix as an object that does not have a web content type, then instead of serving the page, site visitors are prompted to download the content. To resolve this issue, update the content-type metadata entry to a suitable value, such as text/html. See Editing object metadata for instructions on how to do this.
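To choose a suitable value, you can derive it from the file extension. The Python sketch below uses the standard `mimetypes` module and falls back to a generic binary type when nothing matches; the helper name and the fallback value are assumptions.

```python
import mimetypes


def content_type_for(filename: str, default: str = "application/octet-stream") -> str:
    """Guess a Content-Type for an object from its file name."""
    guessed, _encoding = mimetypes.guess_type(filename)
    return guessed or default


print(content_type_for("index.html"))  # text/html
print(content_type_for("README"))      # application/octet-stream
```

You could then apply the value with, for example, gsutil setmeta -h "Content-Type:text/html" gs://BUCKET_NAME/index.html.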

Latency

The following are common latency issues you might encounter. In addition, the Google Cloud Status Dashboard provides information about regional or global incidents affecting Google Cloud services such as Cloud Storage.

Upload or download latency

Issue: I'm seeing increased latency when uploading or downloading.

Solution: Use the gsutil perfdiag command to run performance diagnostics from the affected environment. Consider the following common causes of upload and download latency:

- CPU or memory constraints: The affected environment's operating system should have tooling to measure local resource consumption such as CPU usage and memory usage.

- Disk IO constraints: As part of the gsutil perfdiag command, use the rthru_file and wthru_file tests to gauge the performance impact caused by local disk IO.

- Geographical distance: Performance can be impacted by the physical separation of your Cloud Storage bucket and affected environment, particularly in cross-continental cases. Testing with a bucket located in the same region as your affected environment can identify the extent to which geographic separation is contributing to your latency.

- If applicable, the affected environment's DNS resolver should use the EDNS(0) protocol so that requests from the environment are routed through an appropriate Google Front End.
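As a rough complement to the perfdiag disk tests, the Python sketch below times a sequential read of a temporary local file. It is a hypothetical helper, not how perfdiag measures throughput, and the operating system's page cache usually makes the result optimistic, so treat it only as a quick sanity check.

```python
import os
import tempfile
import time


def local_read_throughput(size_bytes: int = 32 * 1024 * 1024,
                          chunk: int = 1024 * 1024) -> float:
    """Write a temporary file, read it back sequentially, and return the
    read throughput in MiB/s. The page cache may inflate the figure."""
    with tempfile.NamedTemporaryFile(delete=False) as f:
        path = f.name
        f.write(os.urandom(size_bytes))
    try:
        start = time.perf_counter()
        with open(path, "rb") as f:
            while f.read(chunk):
                pass
        elapsed = time.perf_counter() - start
    finally:
        os.remove(path)
    return (size_bytes / (1024 * 1024)) / max(elapsed, 1e-9)


print(f"{local_read_throughput():.1f} MiB/s")
```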

gsutil or client library latency

Issue: I'm seeing increased latency when accessing Cloud Storage with gsutil or one of the client libraries.

Solution: Both gsutil and client libraries automatically retry requests when it's useful to do so, and this behavior can effectively increase latency as seen from the end user. Use the Cloud Monitoring metric storage.googleapis.com/api/request_count to see if Cloud Storage is consistently serving a retryable response code, such as 429 or 5xx.
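To see why retries show up as latency, consider a generic retry loop. The Python sketch below retries 408, 429, and some 5xx status codes using truncated exponential backoff with random jitter. The parameter defaults, the exact set of codes, and the helper name are assumptions, and this is not the client libraries' actual retry implementation.

```python
import random
import time
from typing import Callable, Optional

# Assumed set of retryable status codes for this sketch.
RETRYABLE = {408, 429, 500, 502, 503, 504}


def call_with_backoff(do_request: Callable[[], int],
                      max_attempts: int = 5,
                      initial_delay: float = 1.0,
                      max_delay: float = 32.0,
                      multiplier: float = 2.0,
                      sleep: Callable[[float], None] = time.sleep,
                      rng: Optional[random.Random] = None) -> int:
    """Call `do_request` (which returns an HTTP status code), retrying
    retryable codes with truncated exponential backoff and full jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be >= 1")
    rng = rng or random.Random()
    delay = initial_delay
    attempt = 1
    while True:
        status = do_request()
        if status not in RETRYABLE or attempt >= max_attempts:
            return status
        sleep(rng.uniform(0, delay))  # wait a random time up to the current cap
        delay = min(delay * multiplier, max_delay)
        attempt += 1


responses = iter([503, 429, 200])
waits = []
status = call_with_backoff(lambda: next(responses), sleep=waits.append,
                           rng=random.Random(0))
print(status, len(waits))  # 200 2
```

With these defaults, five attempts can add up to 1 + 2 + 4 + 8 = 15 seconds of waiting in the worst case before the final response, even though each individual request may be fast.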

Proxy servers

Issue: I'm connecting through a proxy server. What do I need to do?

Solution: To access Cloud Storage through a proxy server, you must allow access to these domains:

- accounts.google.com for creating OAuth2 authentication tokens via gsutil config

- oauth2.googleapis.com for performing OAuth2 token exchanges

- *.googleapis.com for storage requests
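If you need to sanity-check which hostnames an allowlist built from these entries would accept, the Python sketch below matches a host against the three patterns using shell-style wildcards. Real proxies have their own matching rules, so the helper name and matching semantics here are assumptions.

```python
from fnmatch import fnmatchcase

# The three domains listed above; "*.googleapis.com" is a wildcard pattern.
ALLOWED_DOMAINS = ["accounts.google.com", "oauth2.googleapis.com", "*.googleapis.com"]


def is_allowed(host: str) -> bool:
    """Return True if `host` matches one of the allowlisted patterns."""
    host = host.lower().rstrip(".")  # normalize case and a trailing root dot
    return any(fnmatchcase(host, pattern) for pattern in ALLOWED_DOMAINS)


for h in ["storage.googleapis.com", "accounts.google.com", "example.com"]:
    print(h, is_allowed(h))
```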

If your proxy server or security policy doesn't support whitelisting by domain and instead requires whitelisting by IP network block, we strongly recommend that you configure your proxy server for all Google IP address ranges. You can find the address ranges by querying WHOIS information at ARIN. As a best practice, you should periodically review your proxy settings to ensure they match Google's IP addresses.

We do not recommend configuring your proxy with individual IP addresses you obtain from one-time lookups of oauth2.googleapis.com and storage.googleapis.com. Because Google services are exposed via DNS names that map to a large number of IP addresses that can change over time, configuring your proxy based on a one-time lookup may lead to failures to connect to Cloud Storage.

If your requests are being routed through a proxy server, you may need to check with your network administrator to ensure that the Authorization header containing your credentials is not stripped out by the proxy. Without the Authorization header, your requests are rejected and you receive a MissingSecurityHeader error.

What's next

- Learn about your support options.

- Find answers to additional questions in the Cloud Storage FAQ.

- Explore how Error Reporting can help you identify and understand your Cloud Storage errors.

Source: https://cloud.google.com/storage/docs/troubleshooting
